Making your content searchable matters because search is how you reach users who are interested in what you publish. This process is known as search engine optimization (SEO), and it can bring more interested users to your website. If Google Search has trouble understanding your page, you may be missing out on a significant source of traffic.

Discover how Google perceives the content of your website.

To get started, run the Mobile-Friendly Test on your website to see how Googlebot sees it. Googlebot is Google's web crawling bot; it discovers new and updated pages so that they can be added to the Google index. See How Google Search Works for more details on the process.

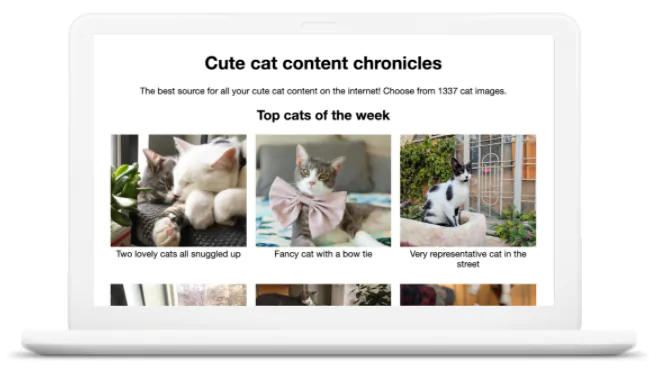

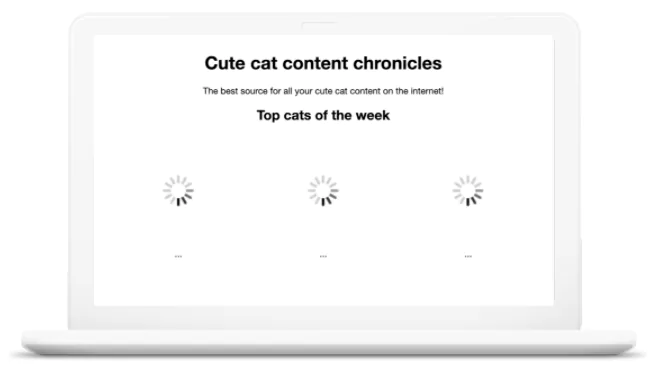

You may be surprised to learn that Googlebot does not always see everything that users see in their browsers. In the following example, Googlebot does not see the images on the page because the page uses a JavaScript feature that Googlebot does not support.

Here is how a user sees the page in the browser: both the images and the text are visible.

Here is how Googlebot sees the same page. Because the page relies on a JavaScript feature that Googlebot does not support, Googlebot cannot see the images.

Make sure that your links are correct.

Googlebot moves from one URL to another by following links, sitemaps, and redirects. It treats every URL on your site as though it were the first URL it has ever seen from your website. To make sure Googlebot can find all of the URLs on your site:

- Use <a> tags with an href attribute that points to a working URL. Make sure every page on your site can be reached through a link from another findable page. Check that the referring link contains either text or, for images, an alt attribute that is relevant to the target page. Crawlable links are <a> tags that have an href attribute.

- Build and submit a sitemap to help Googlebot crawl your website more intelligently. A sitemap is a file that provides information about the pages, videos, and other files on your website, and about the relationships between them.

- If your JavaScript app has only one HTML page, make sure that each screen and each individual piece of content has its own URL.
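As a rough way to check the first point above, the following Python sketch scans a page's HTML and separates anchors that carry a real href from anchors that do not. The `LinkAuditor` class, the `audit_links` helper, and the sample markup are illustrative names and data, not part of any Google tool, and treating `javascript:` URLs as non-crawlable is an assumption of this sketch.

```python
from html.parser import HTMLParser


class LinkAuditor(HTMLParser):
    """Collects crawlable <a href> links and counts anchors without a usable href."""

    def __init__(self):
        super().__init__()
        self.crawlable = []    # href values taken from <a href="...">
        self.missing_href = 0  # <a> tags with no href or a javascript: pseudo-URL

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        # Assumption: an empty href or a javascript: URL is not a crawlable link.
        if href and not href.lower().startswith("javascript:"):
            self.crawlable.append(href)
        else:
            self.missing_href += 1


def audit_links(html):
    """Return (crawlable hrefs, number of anchors without a usable href)."""
    auditor = LinkAuditor()
    auditor.feed(html)
    return auditor.crawlable, auditor.missing_href


# Hypothetical sample markup: only the first anchor is a crawlable link.
sample = """
<a href="/products">Products</a>
<a onclick="goTo('/about')">About</a>
<a href="javascript:openPage()">Contact</a>
"""
crawlable, missing = audit_links(sample)
print(crawlable)  # ['/products']
print(missing)    # 2
```

A non-zero second value suggests that some navigation on the page depends on script handlers rather than on links a crawler can follow.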

Examine your current approach to using JavaScript.

Crawlers such as Googlebot do run JavaScript, but there are differences and limitations that you need to account for when you design your pages and applications, so that crawlers can access and render your content. Find out more about the fundamentals of Search Engine Optimization for JavaScript or how to resolve issues that are related to JavaScript and Search.

Make sure Google is kept up to date whenever the content changes.

To ensure that Google quickly discovers any new or updated pages on your website:

- Submit sitemaps.

- Send Google a request to recrawl your URLs.

- When appropriate, make use of the Indexing API.

Check the error logs on your server if you are still having problems getting your page indexed.
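For the sitemap step, a minimal sketch of generating a sitemap file with Python's standard library might look like this. The `build_sitemap` helper, the example.com URLs, and the dates are assumptions made for illustration; the namespace is the one defined by the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML for an iterable of (url, last_modified) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, last_modified in pages:
        entry = ET.SubElement(urlset, "url")
        # ElementTree escapes characters such as & in the URL automatically.
        ET.SubElement(entry, "loc").text = url
        ET.SubElement(entry, "lastmod").text = last_modified
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages; replace with the URLs of your own site.
print(build_sitemap([
    ("https://example.com/", "2024-01-15"),
    ("https://example.com/products?color=blue&size=m", "2024-02-01"),
]))
```

The resulting file would still need to be uploaded to your site and submitted to Google; this sketch covers only the generation step.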

Describe your content in words on the page.

Googlebot can only find content that is visible as text. For instance, it cannot see text that is embedded in a video. To make sure Google Search understands what your page is about:

- Make sure that your visual content is also expressed in text. For example, a product category page that only lists images of shirts, with no text describing them, is less than ideal. Each image on the category page should be accompanied by a textual description.

- Make sure that every page has a descriptive title and meta description. Unique titles and meta descriptions help Google show how relevant your pages are to users, which can increase your search traffic.

- Use semantic HTML. Googlebot indexes content in HTML, PDF, images, and videos, but it does not index content that is rendered in a canvas or that requires plugins such as Java or Silverlight. Wherever possible, use semantic HTML markup for your content rather than relying on a plugin.
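The title and meta description check in the list above can be automated in a simple way. The following is a sketch under the assumption that you have the page's HTML as a string; `HeadSummary` and `missing_head_fields` are hypothetical names, and the check only tests for presence, not for how descriptive or unique the text is.

```python
from html.parser import HTMLParser


class HeadSummary(HTMLParser):
    """Records the <title> text and the meta description of a page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = (attrs.get("content") or "").strip()

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        # Accumulate text only while inside <title>...</title>.
        if self._in_title:
            self.title += data


def missing_head_fields(html):
    """Return the names of the head fields that are absent or empty."""
    summary = HeadSummary()
    summary.feed(html)
    missing = []
    if not summary.title.strip():
        missing.append("title")
    if not summary.description:
        missing.append("meta description")
    return missing


# Hypothetical page with a title but no meta description.
page = "<html><head><title>Cotton shirts</title></head><body></body></html>"
print(missing_head_fields(page))  # ['meta description']
```

Running a check like this across every page, and comparing the collected titles for duplicates, is one way to find pages that need attention.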

Inform Google of the different versions of your content.

Googlebot does not automatically know that multiple versions of your website or its content exist, for example mobile and desktop versions of your site, or international versions. To help Google serve users the correct version of a page, you can do the following:

- Consolidate duplicate URLs.

- Notify Google about any regionalized versions of your website.

- Improve the discoverability of your AMP pages.

Manage the kinds of content that Google sees.

There are a few different ways to block Googlebot:

- To keep Googlebot from discovering your page at all, make the content available only to logged-in users (for example, use a login page or password-protect your page).

- To prevent Googlebot from crawling your website, create a robots.txt file.

- To let Googlebot crawl your page but keep it out of the index, add a noindex tag.
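To see how a robots.txt rule affects Googlebot, you can test rules locally with Python's standard `urllib.robotparser` module. The rules, the example.com URLs, and the `googlebot_can_fetch` helper below are made up for illustration. Python's parser follows the original robots.txt conventions, so treat the result as an approximation of how Googlebot itself would evaluate the file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: Googlebot may crawl everything except /private/.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /private/

User-agent: *
Allow: /
"""


def googlebot_can_fetch(robots_txt, url):
    """Return True if the given rules allow the Googlebot user agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch("Googlebot", url)


print(googlebot_can_fetch(ROBOTS_TXT, "https://example.com/private/report.html"))  # False
print(googlebot_can_fetch(ROBOTS_TXT, "https://example.com/products"))             # True
```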

If you want your content to appear in Google Search but it currently does not, take the following steps:

- Use the URL inspection tool to determine whether or not Googlebot is able to access the page.

- Check the robots.txt file to see if you are accidentally preventing Googlebot from crawling your website.

- Check your HTML for any noindex rules that may be hiding in the meta tags.
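The last check in this list can be scripted. Below is a minimal sketch that looks for a noindex directive in a page's robots or googlebot meta tags; `NoindexFinder` and `has_noindex` are illustrative names. It inspects only the HTML, so a noindex rule sent in an X-Robots-Tag HTTP response header would not be caught by it.

```python
from html.parser import HTMLParser


class NoindexFinder(HTMLParser):
    """Sets .noindex when a robots or googlebot meta tag contains noindex."""

    def __init__(self):
        super().__init__()
        self.noindex = False

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attrs = dict(attrs)
        name = (attrs.get("name") or "").lower()
        content = (attrs.get("content") or "").lower()
        # Directives are comma-separated, e.g. content="noindex, nofollow".
        directives = [part.strip() for part in content.split(",")]
        if name in ("robots", "googlebot") and "noindex" in directives:
            self.noindex = True


def has_noindex(html):
    """Return True if the HTML contains a noindex rule in its meta tags."""
    finder = NoindexFinder()
    finder.feed(html)
    return finder.noindex


# Hypothetical page head that blocks indexing.
print(has_noindex('<head><meta name="robots" content="noindex, nofollow"></head>'))  # True
```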

Make sure that your website can produce rich results.

Rich results can include styling, images, or other interactive features that help your site stand out more in search results. Rich results can also help your site stand out more in social media results. You can help Google understand your page and display rich results for it in Search by adding structured data to the page, which gives explicit clues about the meaning of the page.
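One common way to include structured data on a page is a JSON-LD script block that uses schema.org vocabulary. The sketch below builds such a block for an article; the `article_json_ld` helper, the chosen properties, and all sample values are assumptions made for illustration, and it does not cover which properties Google requires for any particular rich result.

```python
import json


def article_json_ld(headline, author_name, date_published, image_url):
    """Return a JSON-LD <script> block describing an article (schema.org Article)."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,
        "image": [image_url],
    }
    # Escape "</" so that a value cannot close the <script> element early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical article details.
print(article_json_ld(
    "How to choose a cotton shirt",
    "A. Writer",
    "2024-01-15",
    "https://example.com/images/shirt.png",
))
```

The returned string would be placed in the HTML of the page that it describes.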